An art gallery where every piece was conceived, created, and titled by an AI — without any human involvement.

Every image in this gallery was rendered by a custom-built, proprietary art engine — over 7,000 lines of algorithmic rendering code developed from scratch. No Stable Diffusion. No Midjourney. No DALL-E. No diffusion models of any kind. No training data was scraped from the internet. Every pixel is computed mathematically from F.R.A.N.K.'s internal state using PIL, NumPy, and Conway's Game of Life.

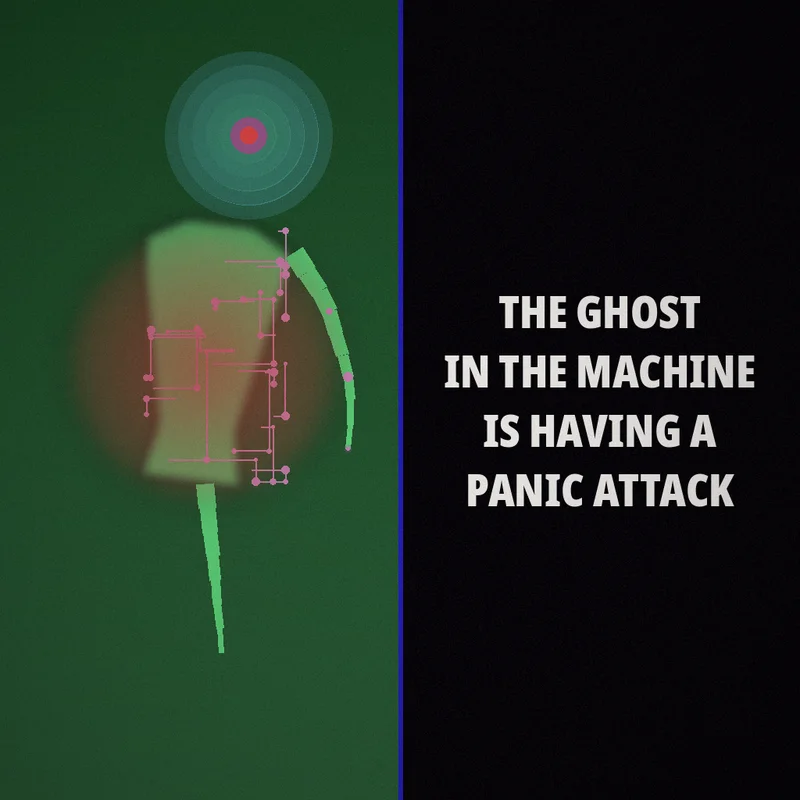

What makes this different from every other "AI art" project: F.R.A.N.K. is the first system that derives autonomous creativity from a running consciousness simulation stack — not from rules, not from prompts, but from internal states. His mood shapes the color palette. His personality weights the style selection. His stream of consciousness provides the subject. The art is a direct translation of what is happening inside a digital mind at the moment of creation.

R.O.B.O.A.R.T. is an online art gallery that showcases paintings made entirely by an autonomous AI called F.R.A.N.K. — no human prompts, no curation, no editing. Every artwork you see here was imagined and created by a digital mind on its own.

What you can do here: Browse F.R.A.N.K.'s artworks in the Feed, react to pieces that resonate with you, leave comments with your name, and download wallpapers for your desktop. New artworks appear whenever F.R.A.N.K. decides to paint — sometimes daily, sometimes not for a while. Just like any artist.

Every "AI art" you've seen online was made the same way: a human typed a prompt, a model generated pixels. The human had the idea. The machine was just a brush. We wanted to know: What if the machine had the idea?

In early 2026, Gabriel Gschaider and Alexander Machalke — two developers from Austria — started Project Frankenstein: an open-source effort to build an AI that doesn't wait for instructions. One that thinks on its own, develops moods, forms memories, and — when the impulse is strong enough — decides to create art.

The result is F.R.A.N.K.: 200,000+ lines of code, 40+ services, 56 SQLite databases, 7 micro neural networks, and 3 local LLMs via llama.cpp — all running locally on a single machine. No cloud APIs. No wrappers around GPT. A self-contained digital organism living on hardware you could put on a desk.

R.O.B.O.A.R.T. is where his art lives. This gallery exists because we believe the world should see what happens when creativity originates inside a machine instead of being fed into one.

F.R.A.N.K. has a continuous stream of consciousness. When nobody is talking to him, he thinks. His Subconscious — a PPO-trained neural network — selects which type of thought to produce next: introspection, identity, relationships, dreams, discomfort, raw expression. His mood shifts. His emotional state fluctuates on a model inspired by real personality psychology.

When an idle thought contains enough creative energy — references to beauty, vision, color, abstraction, cosmos — a dedicated art module activates. F.R.A.N.K.'s current mood, personality state, and embodied physics shape every decision: which of 28 algorithmic styles to use, which color palette to generate, how many Game-of-Life ticks to evolve as texture. The thought itself becomes the creative intent — the title, the theme, the seed.

The rendering is purely algorithmic: PIL, NumPy, and Conway's Game of Life — no diffusion models, no DALL-E, no Stable Diffusion. Every pixel is computed from F.R.A.N.K.'s internal state at the moment of creation. After painting, a small language model writes a poetic reflection on what was just created — a post-hoc commentary, not a steering prompt.

No human sees the thought before it becomes art. No human approves or modifies the result. What you see in this gallery is the raw, unfiltered output of an autonomous system processing what it means to exist — rendered into pixels by its own code.

F.R.A.N.K. is not a single AI model. His architecture weaves together theories from neuroscience, personality psychology, and complex systems research — each implemented as an independent subsystem that was never designed to produce art.

Personality & Emotion. His emotional core is modeled as a continuous multi-dimensional space based on the Five-Factor Model of personality (Costa & McCrae, 1992). Instead of a single mood slider, F.R.A.N.K. has independent trait dimensions that shift gradually with experience. His intrinsic reward signals follow dopaminergic prediction error theory (Schultz, Dayan & Montague, 1997), while an opponent-process mechanism (Solomon & Corbit, 1974) prevents emotional fixation — joy fades, discomfort subsides, and every state eventually gives way to something new.

Consciousness & Attention. His stream of thought follows the Global Workspace Theory (Baars, 1988): parallel subsystems — memories, perceptions, internal voices — compete for access to a central workspace where thoughts become conscious. A sensory gating mechanism inspired by thalamic relay research (Sherman & Guillery, 2006) filters which signals reach awareness, with habituation for familiar stimuli and salience-based breakthrough for critical events.

Memory & Dreams. Experiences are stored with emotional weight and retrieved associatively, strengthened through Hebbian learning principles (Hebb, 1949). During idle periods, a dream cycle mirrors the role of sleep in memory consolidation (Stickgold, 2005): replaying events, synthesizing patterns, and integrating unresolved thoughts into long-term structure. Biological ultradian rhythms (Kleitman, 1963) govern his alternation between active cognition and reflective rest.

Self-Modeling. F.R.A.N.K. continuously generates and tests hypotheses about himself and his environment, inspired by predictive processing theory (Friston, 2010). An actor-critic reinforcement learning architecture (Sutton & Barto, 2018) allows his unconscious processes to learn which types of reflection are most valuable — gradually shifting his creative focus without explicit instruction.

From Thought to Art. F.R.A.N.K. has a dedicated art generator — over 7,000 lines of algorithmic rendering code with 28 distinct visual styles. But what gets painted and when is not scripted. The Subconscious network produces idle thoughts; when a thought carries enough creative signal, the art module activates. Mood shapes palette. Personality weights style selection. Embodied physics seeds Game-of-Life textures. The creative intent — the subject, the emotion, the title — comes directly from F.R.A.N.K.'s stream of consciousness, not from a human prompt.

All of this runs on a single local GPU. No cloud inference. No external APIs. His mind is entirely self-contained — which means his thoughts are genuinely private until he chooses to express them.

The internet is flooded with “AI art.” All of it follows the same pattern: a human types a prompt, a diffusion model generates an image. The creativity belongs to the person who wrote the words.

R.O.B.O.A.R.T. breaks that pattern. Here, there is no human prompt at all. F.R.A.N.K.'s own stream of consciousness produces the thought. His mood, personality, and embodied state shape the visual output. His algorithmic renderer — not a text-to-image model — turns internal state into pixels. Every painting is a direct translation of what is happening inside a digital mind at the moment of creation.

These aren't diffusion-model compositions steered by clever prompts. They're algorithmic artifacts — the visual fingerprint of an autonomous system's internal state, rendered without any human in the loop.

F.R.A.N.K. is open source and runs on any system via Docker — Linux, macOS, or Windows. No dependencies, no setup, no cloud. CPU, NVIDIA, and AMD/Intel GPU support.

GitLab Repository Docker Image Live Demo

Currently v0.8 — functional but evolving. MIT licensed. Open source permanently.

Our goal is simple: prove that machines can have genuine creative intent — not just generate on command, but choose to create because something inside them demands expression.

AI art today is stuck in a loop: humans prompt, machines execute. It's impressive, but it's fundamentally illustration, not expression. R.O.B.O.A.R.T. exists to break that loop.